Learn methods online!

The Gevirtz Graduate School of Education at UC Santa Barbara will host low-cost methods training workshops for early career faculty, post-doctoral scholars, and graduate students. The purpose of these workshops is to offer rigorous preparation in the advanced statistical and evaluation methods required to answer both common and complex research questions in the social sciences.

An Introduction to Latent Class Analysis (LCA) Workshop (Two days)

Instructor: Dr. Karen Nylund-Gibson

This workshop will provide an introduction to latent class analysis (LCA) and its application in Mplus. Topics will include a brief overview of mixture models, including latent class, latent profile, and latent transition analysis, with the primary focus on the specification and interpretation of latent class analysis. The workshop will also cover the inclusion of auxiliary variables (covariates and distal outcomes) in LCA models using modern methods, including the BCH and 3-step methods. Modeling extensions of the basic LCA model will be provided, with examples and Mplus syntax. The workshop will be applied in nature: participants will practice specifying, testing, and interpreting LCA model output in Mplus. Ideally, participants should have an understanding of multiple regression and some familiarity with exploratory factor analysis, SEM techniques, and categorical data analysis.

To benefit the most from this workshop, participants should have their own copy of Mplus installed on their computer. Also, we will be using MplusAutomation in R.

Data Visualization Workshop (One day)

Instructor: Dr. Tarek Azzam

The careful planning of visual tools will be the focus of this workshop. Part of our responsibility as professionals is to turn information into knowledge. Data complexity can often obscure main findings or hinder a true understanding of program impact. So how do we make information more accessible to different audiences? Often this is done by visually displaying data and information, but this approach, if not done carefully, can also lead to confusion. We will explore the underlying principles behind effective information displays. These principles can be applied in almost any area of evaluation or research, and we will draw on the work of Edward Tufte and Stephen Few to illustrate the breadth and depth of their applications. In addition to providing tips to improve most data displays, we will examine the core factors that make them effective. We will discuss the use of common graphical tools and delve deeper into other graphical displays that allow the user to visually interact with the data. Topics covered will include: interactive visual displays, GIS, qualitative and quantitative data display, and crowdsourcing visualizations. This workshop is designed as an introduction to the topics, and no prior training is required.

Introduction to Evaluation Workshop (One day)

Instructor: Dr. Tarek Azzam

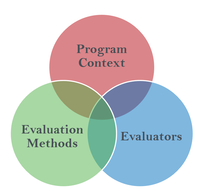

Evaluation practice comprises the integration of three main facets:

- Program Context (e.g., stakeholders, politics, maturity of the program, complexity of the program, etc.)

- Evaluators (e.g., level of expertise, theoretical perspectives, competency, etc.)

- Evaluation Methods (e.g., type of design, interviews, surveys, case studies, RCTs, etc.)

These components are interrelated and constantly evolving, yet they need to be balanced to produce an effective program evaluation. This workshop is designed to cover how these three facets connect when designing an evaluation that is rigorous, feasible, and useful. It will provide participants with a broad understanding of program evaluation and cover topics such as:

- Identifying the differences and similarities between research and evaluation

- Designing a program logic model

- Developing evaluation questions

- Choosing appropriate evaluation designs for various evaluation questions

- Distinguishing evaluation’s purposes and evaluators’ roles and activities

- Determining effective communication and reporting methods for disseminating evaluation information

- Developing evaluations that have a high likelihood of being utilized for decision making

Workshop Format

Each workshop will be a mix of lecture and lab time. All workshop materials, including lecture slides, relevant datasets, and code, will be provided digitally via a link prior to the start of the workshop. All times are Pacific Standard Time (PST).

Sample class format

9:00-10:30: Workshop Instruction

10:30-10:45: Break

10:45-12:30: Workshop Instruction

12:30-1:30: Lunch

1:30-3:00: Workshop Instruction

3:00-3:15: Break

3:30-5:00: Workshop Instruction

The Instructors

Dr. Karen Nylund-Gibson is an Associate Professor of Quantitative Research Methodology in the Department of Education. She has been at UCSB since 2009. Prior to joining the department, she was a Postdoctoral Fellow in the Department of Mental Health at Johns Hopkins University. She earned her Ph.D. at UCLA, working with Bengt Muthén. Her research focuses on latent variable models, specifically mixture models, and she has published many articles and book chapters on developments, best practices, and applications of latent class analysis, latent transition analysis, and growth mixture modeling.

Dr. Tarek Azzam is an Associate Professor in the Department of Education. Dr. Azzam’s research focuses on developing new methods suited for real-world evaluations. These methods attempt to address some of the logistical, political, and technical challenges that evaluators commonly face in practice. His work aims to improve the rigor and credibility of evaluations and increase their potential impact on programs and policies. His research involves studying the impact of politics on the evaluation process and the integration of new technologies and resources, such as crowdsourcing, to develop new evaluation-specific methodologies.
